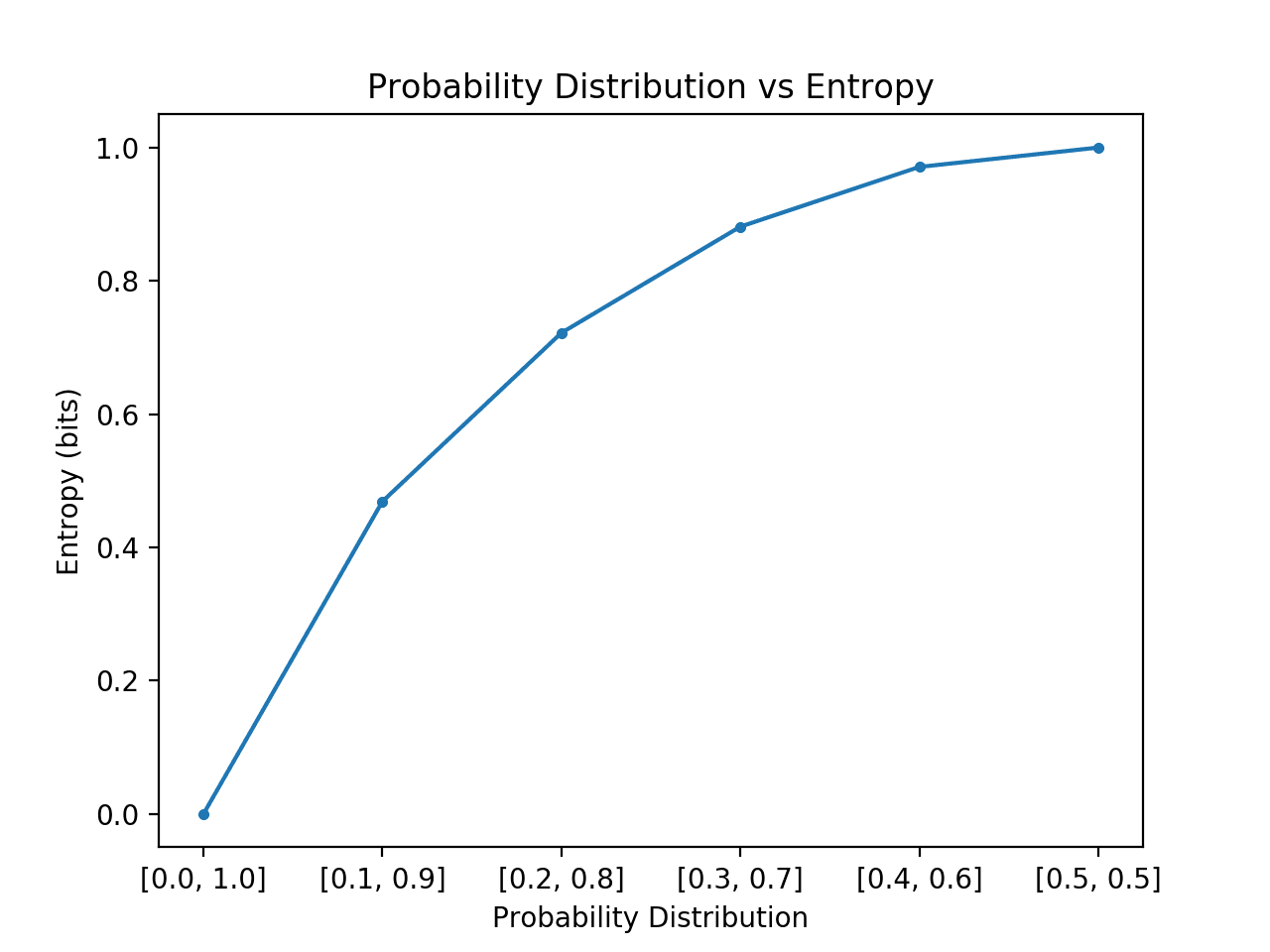

As far as I understand, the entropy of a random variable $X$ should be the mean amount of self-information over the states of that random variable. I have read somewhere that, given a random variable and the self-information of its states, each self-information amount gives the minimal number of bits needed to encode the corresponding state.

I do understand this when we are talking about variables following a uniform probability distribution. For instance, if we have a variable with 4 states, [...] In that case, the self-information of each state $v$ gives the number of bits needed to efficiently transmit that state, under the assumption that, since all the states have the same probability, we should not "prioritize" one over the others.

But I do not understand how this holds for a random variable with a non-uniform probability distribution: why should $h(v) = -\log_2 P(v)$ be the number of bits needed for exactly that state $v$? And can the probability be approximated by an estimate based on the results of experiments, that is, on observed realizations of that random variable?

Thanks, and sorry for the extensive questions.

There are essentially two cases, and it is not clear from your sample which one applies here. (1) Your probability distribution is discrete. Then you have to translate what appear to be relative frequencies to probabilities, `pA = A / A.sum()`, and compute `Shannon2 = -np.sum(pA * np.log2(pA))`. (2) Your probability distribution is continuous.
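The discrete case, where relative frequencies are turned into probabilities before the entropy is computed, can be sketched in plain Python. This is a minimal illustration and not the original poster's code: the function name `shannon_entropy` and the sample counts are assumptions made for the example, and the standard `math` module stands in for NumPy.

```python
import math


def shannon_entropy(counts):
    """Entropy in bits of the distribution obtained by normalizing `counts`.

    `counts` is a hypothetical list of non-negative observation counts,
    one entry per state of the random variable.
    """
    total = sum(counts)
    if total <= 0:
        raise ValueError("counts must have a positive sum")
    # Relative frequencies -> probabilities. States with a zero count are
    # skipped because p * log2(p) tends to 0 as p tends to 0.
    probs = [c / total for c in counts if c > 0]
    # Entropy is the probability-weighted mean of the self-information
    # h(v) = -log2 P(v) of each state.
    return -sum(p * math.log2(p) for p in probs)


# Four equally likely states: every state carries 2 bits.
uniform = shannon_entropy([5, 5, 5, 5])

# Non-uniform case with probabilities 1/2, 1/4, 1/8, 1/8.
skewed = shannon_entropy([4, 2, 1, 1])

print(uniform, skewed)
```

In the non-uniform example the self-information values are 1, 2, 3 and 3 bits. A prefix code such as `0`, `10`, `110`, `111` uses exactly those lengths, so the average codeword length equals the entropy of 1.75 bits. That is one way to see why the more probable states get the shorter codes.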
I would like some clarifications on two points of Shannon's definition of entropy for a random variable and his notion of self-information of a state of the random variable. We define the amount of self-information of a certain state of a random variable as $h(v) = -\log_2 P(v)$. As far as I understand, Shannon arrived at this definition because it respected some intuitive properties (for instance, we want the states with the highest probability to give the least amount of information, etc.).

Actually, Shannon's definition of entropy is not intuitive. I understand that some, including the great Thomas Cover, prefer to simply define entropy, for pedagogical purposes. I highly recommend reading chapter 2 of 'Information Theory' by Robert B. It reviews the axiomatic foundation from which the notion of entropy is derived.

The concept of entropy has also been introduced into information theory. The concept of information entropy was introduced by Claude Shannon in his 1948 paper "A Mathematical Theory of Communication". For any discrete random variable that can take values $a_j$ with probabilities $P(a_j)$, the Shannon entropy is defined as

$$H_{\mathrm{Shannon}}(a) = -\sum_j P(a_j) \log_2 P(a_j). \tag{4.4.6}$$

A logarithm to the base 2 is used here because the information is assumed to be coded in binary.

Ecologists use entropy as a diversity measure. From an ecological point of view, it is best if the terrain is species-differentiated. Programmers deal with a particular interpretation of entropy called programming complexity.

You may also come across the phrase 'password entropy'. It's a measurement of how random a password is. It takes into account the number of characters in your password and the pool of unique characters you can choose from (e.g., 26 lowercase characters, 36 alphanumeric characters). The higher the entropy of your password, the harder it is to crack.
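As a rough illustration of password entropy, the sketch below uses the common estimate of length times $\log_2$ of the pool size. That formula is an assumption here, since the text above does not spell one out, and it only holds if every character is picked uniformly and independently from the pool. The function name `password_entropy_bits` is made up for the example.

```python
import math


def password_entropy_bits(length, pool_size):
    """Estimated entropy in bits of a randomly generated password.

    Assumes each of the `length` characters is drawn uniformly and
    independently from `pool_size` possible characters, so each character
    contributes log2(pool_size) bits.
    """
    if length < 0 or pool_size < 1:
        raise ValueError("length must be >= 0 and pool_size must be >= 1")
    return length * math.log2(pool_size)


# 8 characters from the 26 lowercase letters: roughly 37.6 bits.
lowercase_8 = password_entropy_bits(8, 26)

# 8 characters from the 36 alphanumeric characters: roughly 41.4 bits.
alnum_8 = password_entropy_bits(8, 36)

print(round(lowercase_8, 1), round(alnum_8, 1))
```

Passwords chosen by people are far from uniformly random, so this estimate is best read as an upper bound for them.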
Based on the local Shannon entropy concept in information theory, a new measure of aromaticity is introduced. This index, which describes the probability of [...]

Now you know how to calculate Shannon entropy on your own! Keep reading to find out some facts about entropy!

The term "entropy" was first introduced by Rudolf Clausius in 1865. It comes from the Greek "en-" (inside) and "trope" (transformation). Before, it was known as "equivalence-value". In physics and chemistry, the entropy symbol is a capital S. It's said to have been chosen by Clausius in honor of Sadi Carnot (the father of thermodynamics). In information theory, the entropy symbol is usually H, the capital Greek letter 'eta'.
